The Michigan State Police (MSP) has acquired software that will allow the law enforcement agency to “help predict violence and unrest,” according to a story published by The Intercept.

I could not help but be reminded of the film Minority Report. In that film three exceptionally talented psychics are used to predict crimes before they happen and apprehend the would-be perpetrators. These not-yet perpetrators are guilty of what is called “pre-crime,” and they are sentenced to live in a very nice virtual reality where they will not be able to hurt others.

The public’s acceptance of the fictional pre-crime system is based on good numbers: It has eliminated all premeditated murders for the past six years in Washington, D.C., where it has been implemented. That goes to prove (fictionally, of course) that if you lock up enough people, even ones who have never committed a crime, crime will go down.

How does the MSP software work? Let me quote again from The Intercept:

The software, put out by a Wyoming company called ShadowDragon, allows police to suck in data from social media and other internet sources, including Amazon, dating apps, and the dark web, so they can identify persons of interest and map out their networks during investigations. By providing powerful searches of more than 120 different online platforms and a decade’s worth of archives, the company claims to speed up profiling work from months to minutes.

Simply reclassify all of your online “friends,” “connections” and “followers” as accomplices and you’ll start to get a feel for what this software and other pieces of software mentioned in the article can do.

The ShadowDragon software in concert with other similar platforms and companion software begins to look like what the article calls “algorithmic crime fighting.” Here is the main problem with this type of thinking about crime fighting and the general hoopla over artificial intelligence (AI): Both assume that human behavior and experience can be captured in lines of computer code. In fact, at their most audacious, the biggest boosters of AI claim that it can and will learn the way humans learn and exceed our capabilities.

Now, computers do already exceed humans in certain ways. They are much faster at calculations and can do very complex ones far more quickly than humans can working with pencil and paper or even a calculator. Also, computers and their machine and robotic extensions don’t get tired. They can perform repetitive tasks, often complex ones, with extraordinary accuracy and speed.

What they cannot do is exhibit the totality of how humans experience and interpret the world. And this is precisely because that experience cannot be translated into lines of code. In fact, characterizing human experience is such a vast and various endeavor that it fills libraries across the world with literature, history, philosophy and the sciences (biology, chemistry and physics) using the far more subtle construct of natural language, and still we are nowhere near done describing the human experience.

It is the imprecision of natural language which makes it useful. It constantly connotes rather than merely denotes. With every word and sentence it offers many associations. The best language opens paths of discovery rather than closing them. Natural language is both a product of us humans and of our surroundings. It is a cooperative, open-ended system.

And yet, natural language and its far more limited subset, computer code, are not reality, but only a faint representation of it. As the father of general semantics, Alfred Korzybski, so aptly put it, “The map is not the territory.”

Apart from the obvious dangers of the MSP’s “algorithmic crime fighting,” such as racial and ethnic profiling and gender bias, there is the difficulty of explaining why information picked up by the algorithm is relevant to a case. If there is human intervention to determine relevance, then that moves the system away from the algorithm.

But it is the act of hoovering up so much irrelevant information that risks creating a pattern that is compelling and seemingly real, but which may just be an artifact of having so much data. This becomes all the more troublesome when law enforcement is trying to “predict” unrest and crimes, something which the MSP says it doesn’t do even though its systems have that capability.
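
To see how sheer volume can manufacture a pattern, consider a minimal Python sketch. It is purely illustrative and says nothing about how ShadowDragon or any real product works: the function names, the idea of a per-person “flag” score and the sample sizes are all assumptions invented for the example. Every number in it is random noise, yet the more unrelated “signals” we compare against the flag score, the stronger the best-looking correlation becomes.

```python
import random


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length lists."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        return 0.0
    return cov / (var_x * var_y) ** 0.5


def strongest_chance_correlation(num_people, num_signals, seed=0):
    """Return the largest |correlation| between a random 'flag' score
    and any of num_signals random, unrelated signals.

    Hypothetical setup: nothing here is real data, and no signal has
    any true relationship to the flag score.
    """
    rng = random.Random(seed)
    flag_score = [rng.random() for _ in range(num_people)]
    best = 0.0
    for _ in range(num_signals):
        signal = [rng.random() for _ in range(num_people)]
        best = max(best, abs(pearson(flag_score, signal)))
    return best


if __name__ == "__main__":
    for num_signals in (5, 50, 500, 5000):
        best = strongest_chance_correlation(30, num_signals)
        print(f"{num_signals:>5} unrelated signals: strongest |r| = {best:.2f}")
```

Because each run with the same seed reuses the same stream of random numbers, a larger search includes everything the smaller one saw and can only turn up an equal or stronger “match.” Nothing about the people in the sample has changed. Only the amount of data searched has.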

The temptation will grow to use such systems to create better “order” in society by focusing on the “troublemakers” identified by these systems. Societies have always done some form of that through their institutions of policing and adjudication. Now, companies seeking to profit from their ability to “find” the “unruly” elements of society will have every incentive to write algorithms that show the “troublemakers” to be a larger segment of society than we ever thought before.

We are being put on the same road in our policing and courts that we’ve just traversed in the so-called “War on Terror,” which has killed a lot of innocent people and made a large number of defense and security contractors rich, but which has left us with a world that is arguably less safe than it was before.

To err is human. But to correct is also human, especially based on intangibles (intuitions, hunches, glimpses of perception) which give us humans a unique ability to see beyond the algorithmically defined “facts” and even beyond those facts presented to our senses in the conventional way. When a machine fails, not in a trivial way that merely fails to check and correct data, but in a fundamental way that misconstrues the situation, it has no unconscious or intuitive mind to sense that something is wrong. The AI specialists have a term for this. They say that the machine lacks “common sense.”

The AI boosters will respond, of course, that humans can remain in the loop. But to admit this is to admit that the future of AI is much more limited than portrayed and that as with any tool, its usefulness all depends on how the tool is used and who is using it.

It is worth noting that the title of the film mentioned at the outset, Minority Report, refers to a disagreement among the psychics, that is, one of them issues a “minority report” which conflicts with the others. It turns out that for the characters in this movie the future isn’t so clear after all, even to the sensitive minds of the psychics.

Nothing is so clear and certain in the future or even in the present that we can allay all doubts. And, when it comes to determining what is actually going on, context is everything. But no amount of data mining will provide us with the true context in which the subject of an algorithmic inquiry lives. For that we need people. And, even then the knowledge of the authorities will be partial.

If only the makers of this software would insert a disclaimer in every report saying that users should look upon the information provided with skepticism and thoroughly interrogate it. But then, how many suites of software would these software makers sell with that caveat prominently displayed on their products?

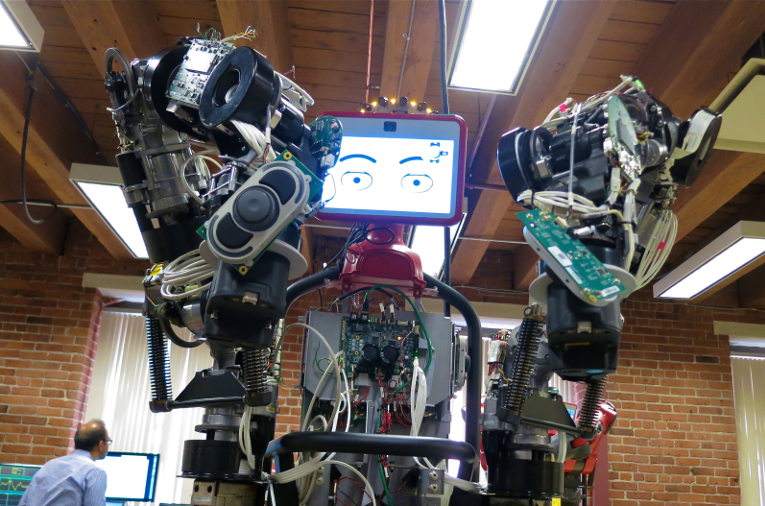

“Roughed up by Robocop” – disassembled robot. (2013) by Steve Jurvetson. via Wikimedia Commons https://commons.wikimedia.org/wiki/File:Roughed_up_by_Robocop_(9687272347).jpg